First-class connections

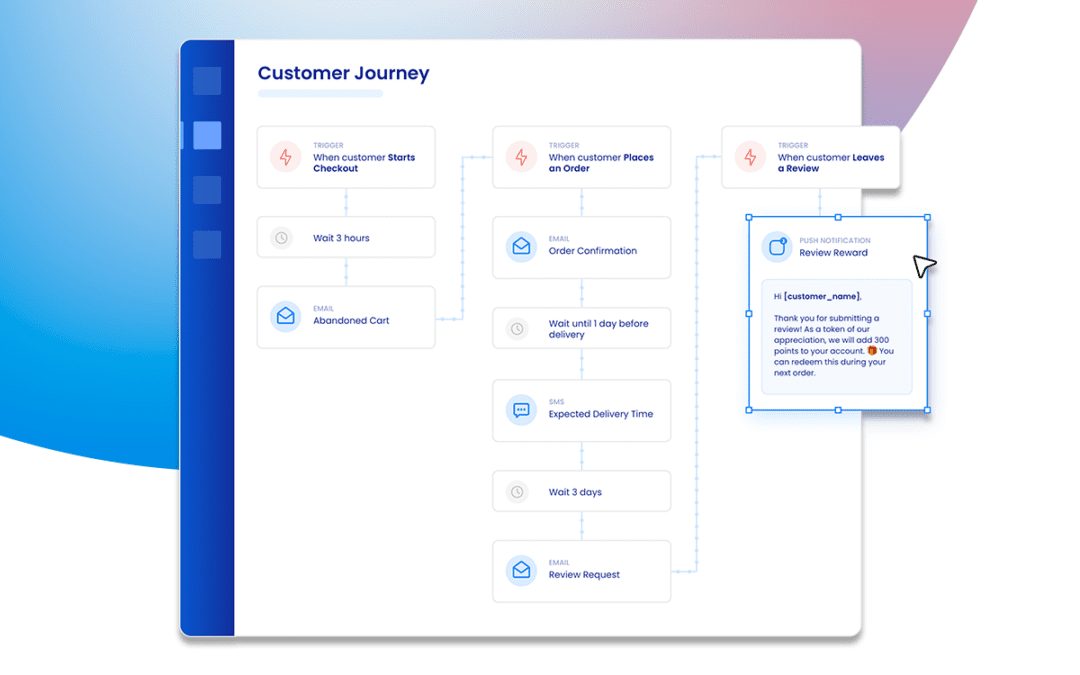

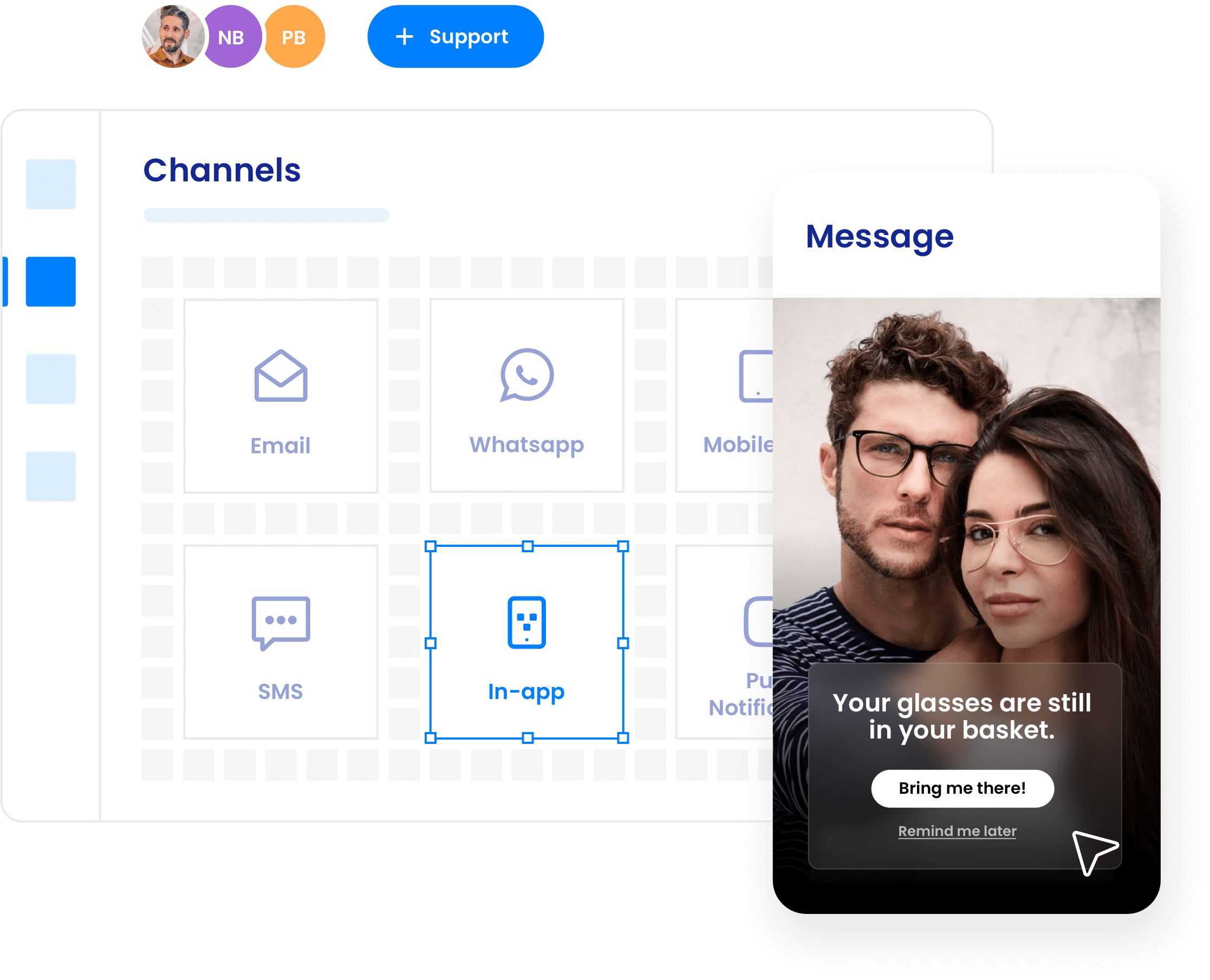

Intuitive and powerful, Deployteq allows you to easily engage with your customers based on their behavior and interactions.

With simple drag-and-drop functionality, instantaneous segmentation, and the ability to integrate with any secure data source, you can build fully connected customer experiences across any channel on this single customer view marketing automation platform.

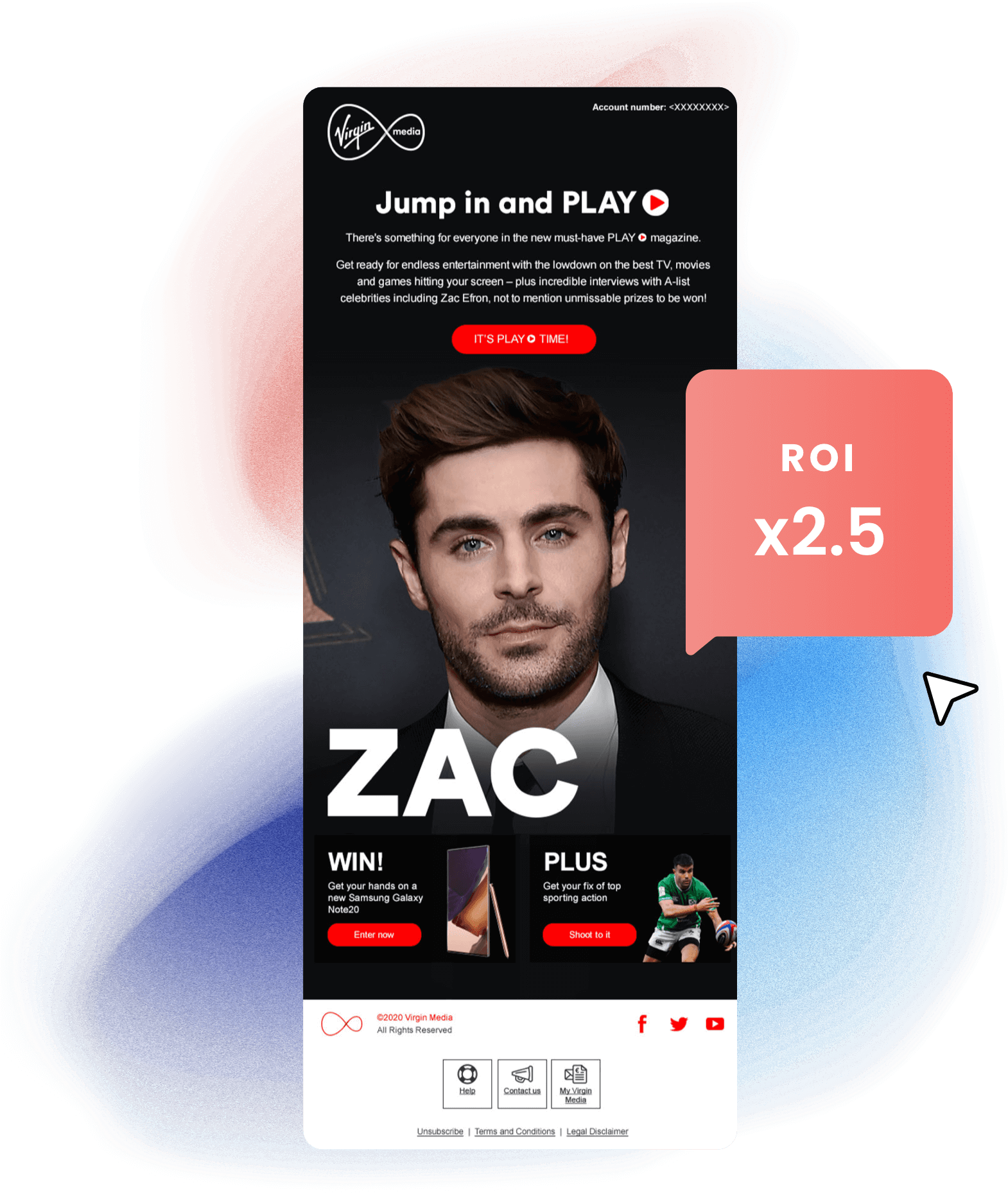

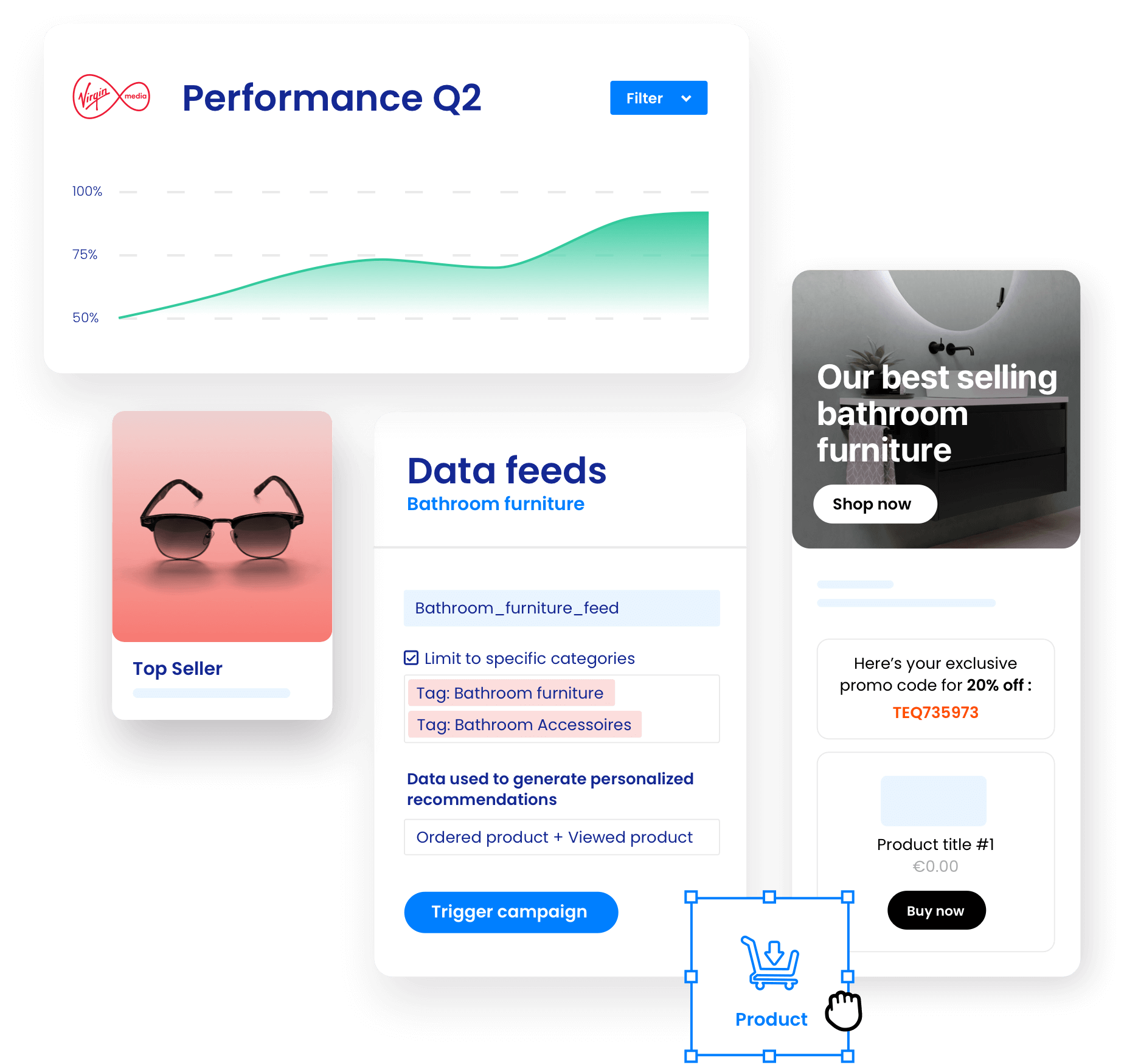

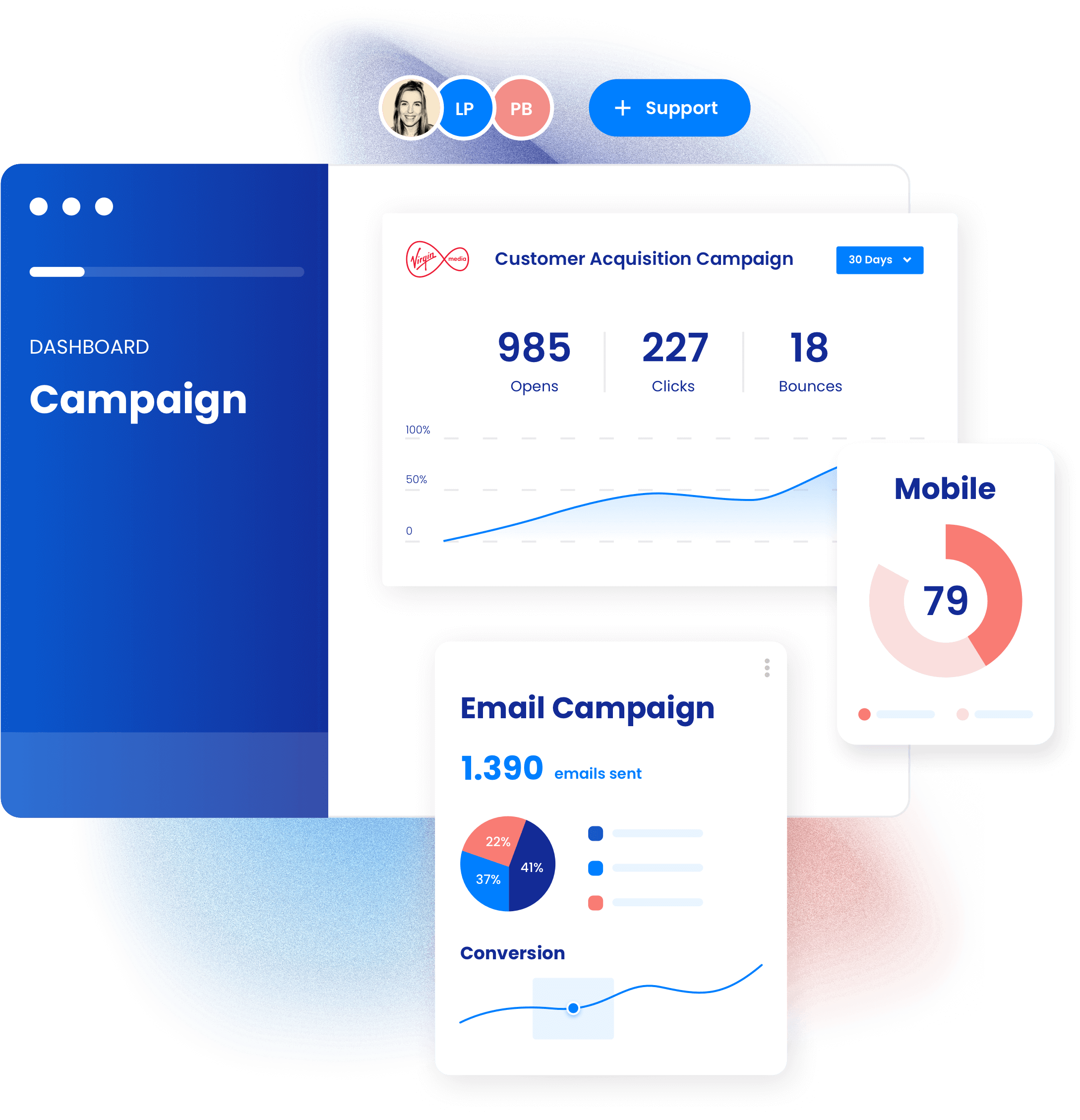

Virgin Media gained a 2.5x ROI with one single campaign

Virgin Media send millions of hyper-personalised messages through Deployteq across multiple channels, based on users' preferences. Want to know more?

Deployteq services

Let Deployteq’s award-winning team give your marketing a boost. Our performance marketers work across data, strategy, and design to optimise your campaigns. We can deliver everything in-house, work as an extension of your team, or work with your partner agencies. We are here to support you 24/7.

Build dynamic segmentations

Create tailored audiences in minutes for the ultimate personalised campaigns using our intuitive and dynamic profile builder.

2.5x increase in ROI

3.6 billion messages every year

99.8% deliverability rate

4,700 daily users

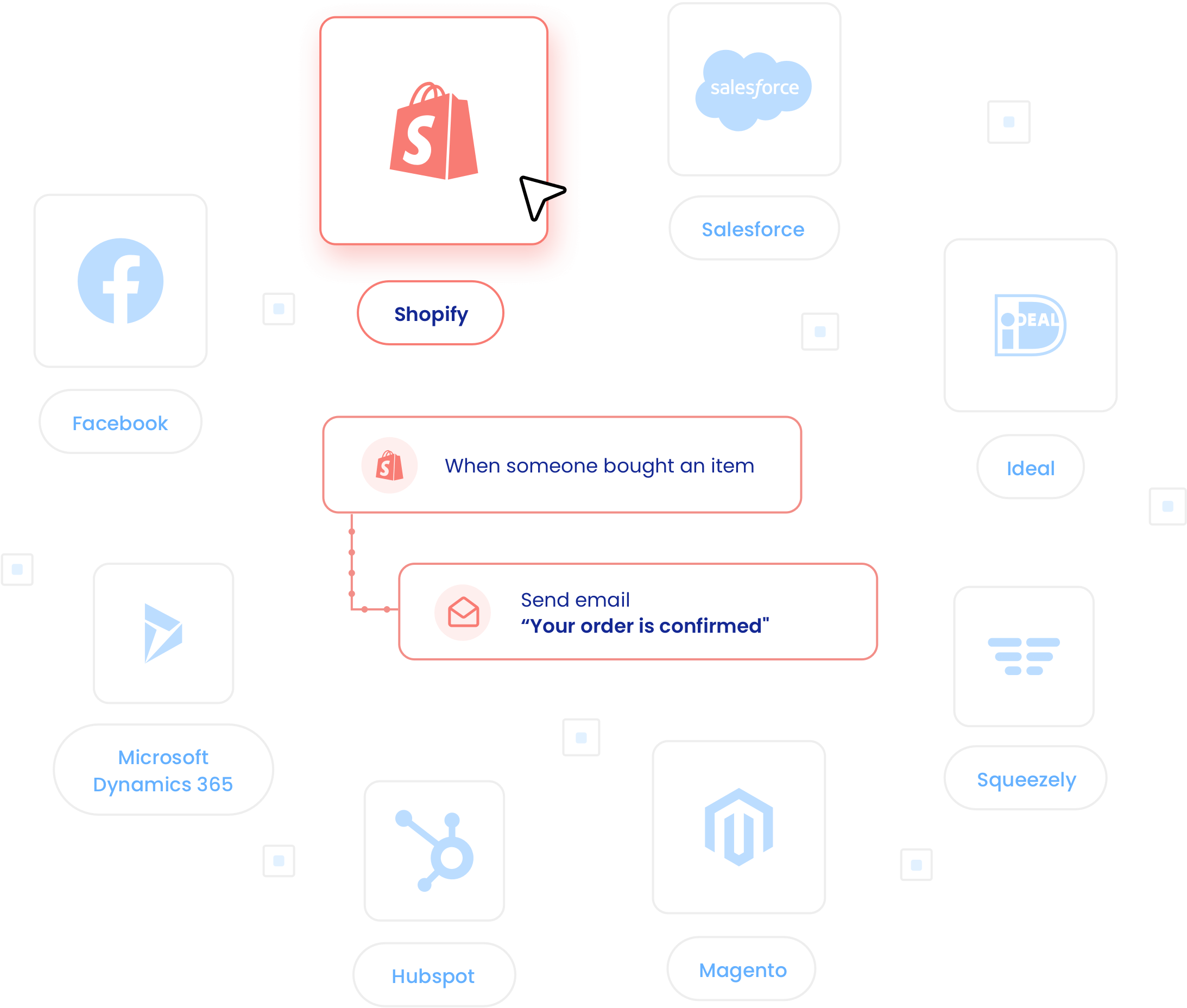

1. Connect your tech anywhere

Utilise your integrations and streamline your connections through one platform, saving on additional investments.

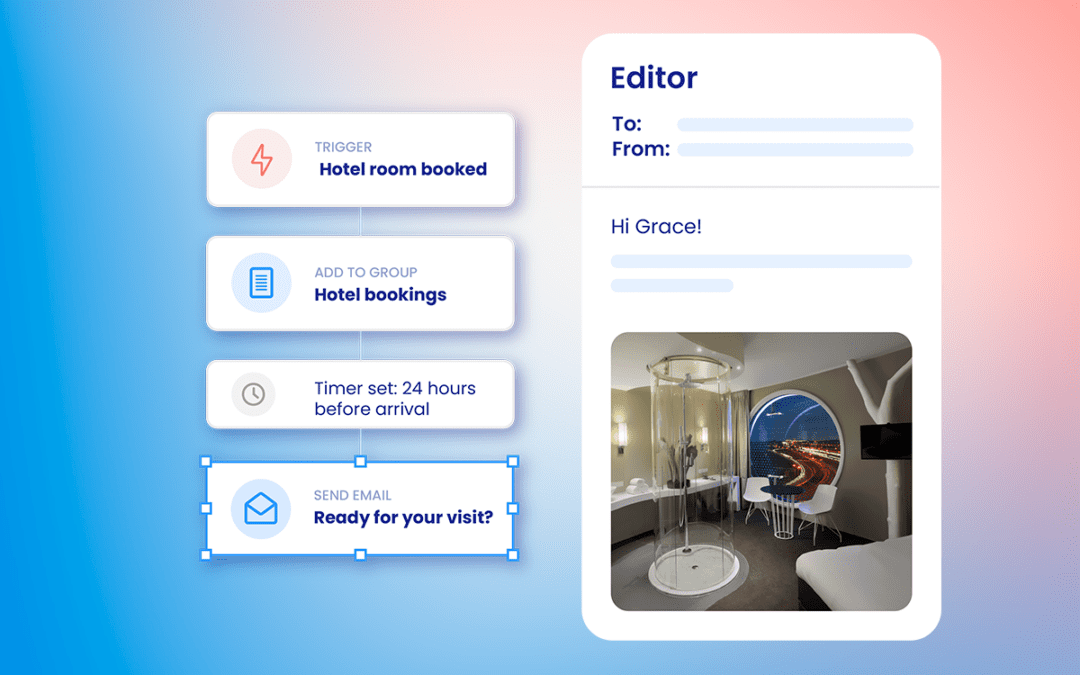

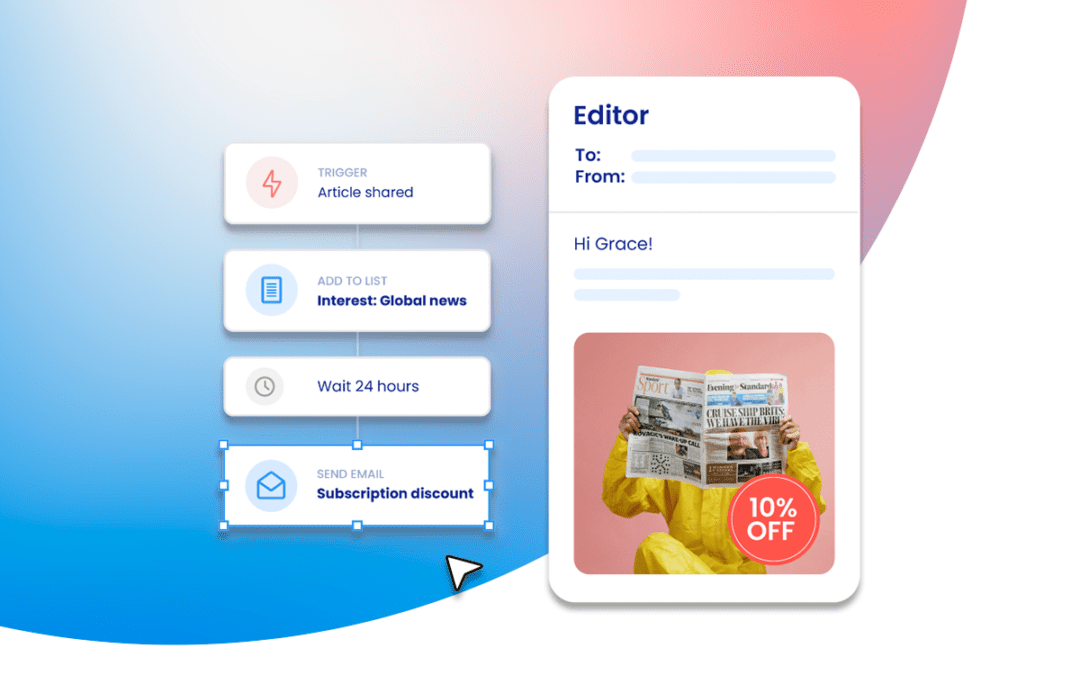

2. Enhance engagement with automated campaigns

While you’re focusing on marketing transformation, ensure your customers are getting the right message at the right time with automated campaigns.

3. Improve and perform

Custom dashboards and deep insights mean your campaigns will grow and improve with your business.

Try it today

Deployteq combines deep data with ultimate flexibility, meaning you send the right message, to the right channel, at exactly the right time – every time.

Want to know why these brands love us?

Other Inspired Thinking Group solutions

Storyteq

Want Gartner award-winning creative marketing technology?

Configteq

Want an award-winning online experience?
